The Compute Challenge Driving AI Expenses

At the heart of artificial intelligence’s soaring costs lies the monumental compute challenge. Training advanced AI models demands vast computational power, which translates into significant energy consumption and investment in specialized hardware. As models grow in size and are trained on more data, the number of calculations required grows rapidly, roughly in proportion to the product of parameter count and training data volume. This not only lengthens training time but also multiplies the expenses for cloud infrastructure, GPUs, and the cooling systems needed to sustain continuous operation.

Key factors driving compute-related AI expenses:

- Hardware Specialization: Cutting-edge AI workloads often rely on high-performance GPUs and TPUs that come with premium pricing.

- Energy Demand: The energy consumed during extensive training phases adds up rapidly, especially as models are trained over weeks or months.

- Scale of Training Data: Larger datasets require more compute cycles to process, increasing total costs.

- Iteration and Experimentation: Model development isn’t linear; the multiple training runs needed to refine performance further amplify compute expenses.

| Compute Cost Driver | Expense Impact |
|---|---|
| Model Size (Parameters) | High |
| Training Duration | Moderate to High |
| Hardware Type | Very High |
| Energy Consumption | Moderate |
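A common back-of-the-envelope model ties these drivers together: training a dense transformer takes roughly 6 floating-point operations (FLOPs) per parameter per training token. The sketch below is a rough estimate, not a pricing tool. It converts that figure into GPU-hours, dollars, and energy. The accelerator throughput, utilization, hourly price, and power draw are hypothetical placeholders, the `TrainingRun` and `estimate` names are illustrative, and `runs` stands in for repeated experiments.

```python
from dataclasses import dataclass


@dataclass
class TrainingRun:
    params: float              # model parameters (N)
    tokens: float              # training tokens (D)
    gpu_peak_flops: float      # peak FLOP/s per accelerator (hypothetical)
    utilization: float         # fraction of peak actually achieved (hypothetical)
    price_per_gpu_hour: float  # USD per accelerator-hour (hypothetical)
    gpu_power_kw: float        # average draw per accelerator in kW (hypothetical)
    runs: int = 1              # repeated training runs / experiments


def estimate(run: TrainingRun) -> dict:
    # Rule of thumb for dense transformers: ~6 FLOPs per parameter per token
    total_flops = 6 * run.params * run.tokens * run.runs
    effective_flops_per_s = run.gpu_peak_flops * run.utilization
    gpu_hours = total_flops / effective_flops_per_s / 3600
    return {
        "flops": total_flops,
        "gpu_hours": gpu_hours,
        "compute_cost_usd": gpu_hours * run.price_per_gpu_hour,
        # Accelerator energy only; excludes cooling and other facility overhead
        "energy_kwh": gpu_hours * run.gpu_power_kw,
    }


# Hypothetical 7B-parameter model trained on 1 trillion tokens
base = dict(params=7e9, tokens=1e12, gpu_peak_flops=1e15, utilization=0.4,
            price_per_gpu_hour=2.0, gpu_power_kw=0.7)
for runs in (1, 3):
    result = estimate(TrainingRun(**base, runs=runs))
    print(f"{runs} run(s): {result['gpu_hours']:,.0f} GPU-hours, "
          f"${result['compute_cost_usd']:,.0f}, {result['energy_kwh']:,.0f} kWh")
```

Because every term is a product, doubling the parameters, the training tokens, or the number of experimental runs doubles the estimated bill; the drivers compound multiplicatively.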

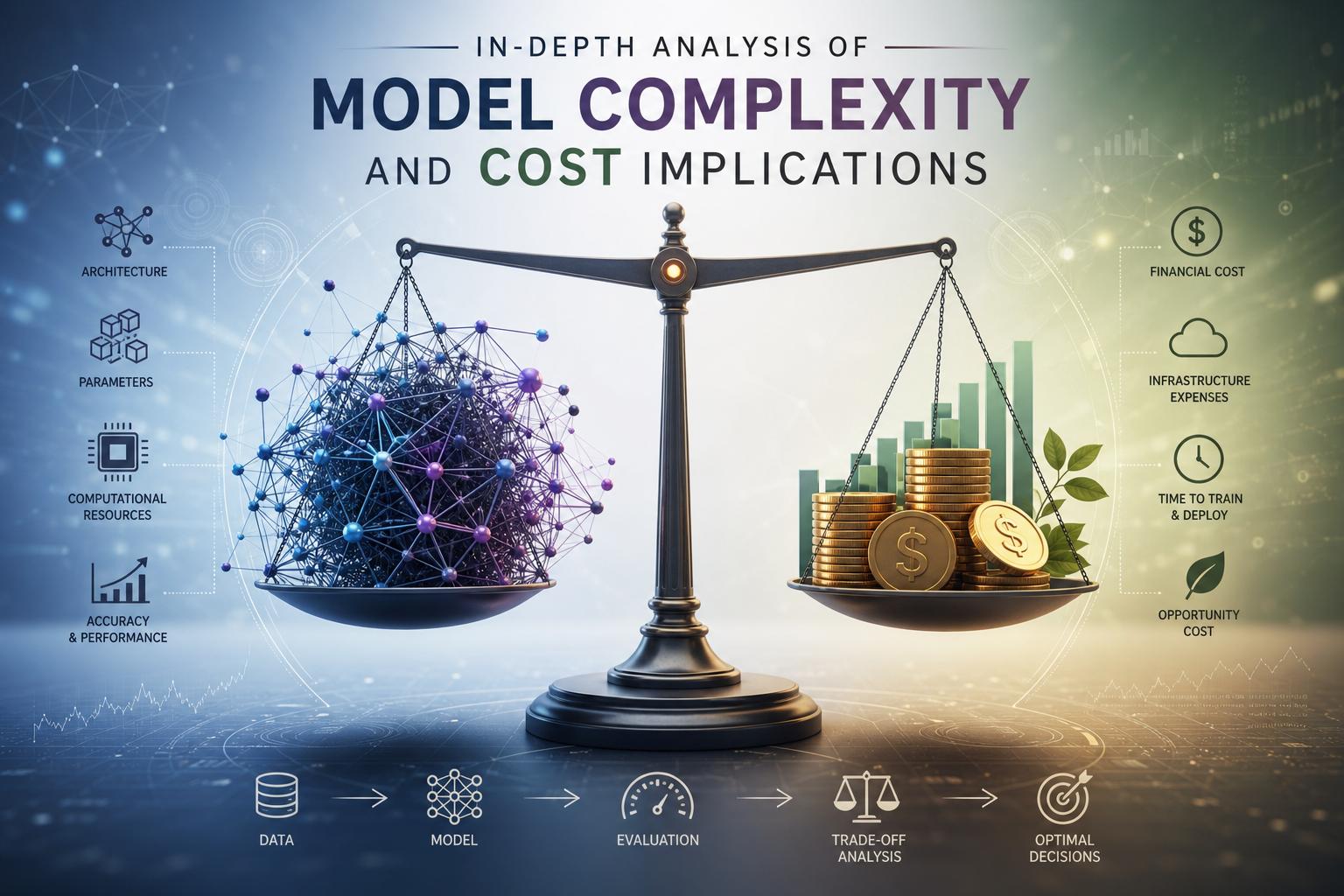

In-Depth Analysis of Model Complexity and Cost Implications

Modern AI systems derive their capabilities from layers of complexity hidden behind seemingly effortless results. At the core, model architecture (the depth, width, and connectivity of a neural network) directly drives computational demands. Models with billions or trillions of parameters require far more processing power to train and fine-tune, because compute grows with both parameter count and the amount of training data, pushing data centers to operate at unprecedented energy scales. This complexity does not just inflate raw compute costs; it also necessitates specialized hardware such as GPUs, TPUs, or custom AI accelerators designed to handle the massively parallel calculations that standard CPUs cannot perform efficiently.
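To make "depth and width" concrete, a common approximation for a decoder-only transformer is about 12·d² parameters per layer (4·d² for the attention projections and 8·d² for a feed-forward block with a 4·d hidden size), plus a vocabulary × d embedding table. The sketch below assumes that standard layout, tied input/output embeddings, and no biases or layer-norm weights; real architectures vary, and `transformer_params` is an illustrative helper.

```python
def transformer_params(n_layers: int, d_model: int, vocab_size: int) -> int:
    """Approximate parameter count of a decoder-only transformer (sketch)."""
    attention = 4 * d_model * d_model           # Q, K, V and output projections
    feed_forward = 2 * d_model * (4 * d_model)  # up- and down-projection, 4x hidden
    per_layer = attention + feed_forward        # = 12 * d_model**2
    embeddings = vocab_size * d_model           # assumes tied input/output embeddings
    return n_layers * per_layer + embeddings


# Hypothetical configurations: each step doubles both depth and width
for layers, width in [(12, 768), (24, 1536), (48, 3072)]:
    n = transformer_params(layers, width, vocab_size=50_000)
    print(f"{layers:>3} layers x {width:>5} width -> {n / 1e9:.2f}B params")
```

Doubling both depth and width multiplies the non-embedding parameter count by about eight (2× from layers, 4× from the squared width), and training compute on a fixed dataset grows by roughly the same factor.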

Additionally, the cost implications extend beyond initial training to encompass ongoing usage and deployment challenges. Consider how inference – the process of running a trained model to generate outputs – varies dramatically based on model size and user traffic. The table below highlights typical cost components tied to model complexity and usage patterns within AI infrastructure:

| Cost Component | Impact Factor | Typical Range |
|---|---|---|
| Training Compute | Number of Parameters | Millions to trillions of parameters |
| Inference Latency | Model Depth & Usage Frequency | Milliseconds to Seconds |
| Energy Consumption | Hardware Efficiency & Model Size | Kilowatt-hours per Day |
| Maintenance & Updates | Model Complexity & Dataset Scale | Ongoing Operational Costs |

- Trade-offs in design: Balancing up-front build and training cost against operational efficiency.

- Scalability challenges: Costs grow non-linearly with model and user scale.

- Optimization needs: Continuous efforts to reduce redundancy and improve inference speed.
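For inference, a common rule of thumb is roughly 2 FLOPs per parameter per processed or generated token, so daily compute scales with both model size and traffic. The sketch below assumes that rule, ignores attention-cache and memory-bandwidth effects (which often dominate real latency), and uses hypothetical traffic, throughput, and price figures; `daily_inference_cost` is an illustrative helper.

```python
def daily_inference_cost(params: float, requests_per_day: float,
                         tokens_per_request: float, gpu_peak_flops: float,
                         utilization: float, price_per_gpu_hour: float) -> float:
    """Rough USD cost per day of serving a model (sketch, compute-bound model)."""
    # Rule of thumb: ~2 FLOPs per parameter per token for a forward pass
    flops_per_day = 2 * params * tokens_per_request * requests_per_day
    gpu_hours = flops_per_day / (gpu_peak_flops * utilization) / 3600
    return gpu_hours * price_per_gpu_hour


# Hypothetical comparison: a 7B and a 70B model under identical traffic
for n in (7e9, 70e9):
    cost = daily_inference_cost(n, requests_per_day=1e6, tokens_per_request=1_000,
                                gpu_peak_flops=1e15, utilization=0.3,
                                price_per_gpu_hour=2.0)
    print(f"{n / 1e9:.0f}B params: ${cost:,.2f} per day")
```

Under this simple model, serving cost is linear in both parameters and traffic. The non-linear jumps seen in practice tend to come from effects the sketch leaves out, such as over-provisioning to meet latency targets or needing several accelerators once a model no longer fits in one device's memory.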

Understanding these intricate dynamics is key to grasping why building and sustaining cutting-edge AI capabilities demands such substantial financial investment.

Evaluating the Impact of Usage Patterns on AI Investments

Understanding how different usage patterns shape the total cost of AI investments requires dissecting where and how computational resources are consumed. High-frequency query engines, for example, demand consistent processing power, driving up expenses for cloud compute time and energy. Conversely, infrequent but complex requests may shift the cost distribution toward the underlying model complexity and memory demands.

Key usage factors influencing AI expenses include:

- Query Volume: The sheer number of interactions scales consumption proportionally.

- Task Complexity: More sophisticated tasks require larger models and more intensive computation.

- Latency Requirements: Real-time applications necessitate optimized infrastructure, increasing operational costs.

- Data Throughput: High data intake amplifies storage and preprocessing investments.
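When a model is consumed through a metered API, the first two factors combine multiplicatively: query volume sets how many calls are made, and task complexity largely sets how many tokens each call consumes. A minimal sketch, assuming per-token pricing with hypothetical rates (real providers' prices and billing units differ); the `UsageProfile` and `monthly_cost` names and the two example profiles are illustrative.

```python
from dataclasses import dataclass


@dataclass
class UsageProfile:
    name: str
    queries_per_day: int
    input_tokens: int    # average prompt size per query
    output_tokens: int   # average response size per query


def monthly_cost(profile: UsageProfile, usd_per_1k_input: float,
                 usd_per_1k_output: float, days: int = 30) -> float:
    """Estimated monthly spend under simple per-token pricing (sketch)."""
    per_query = (profile.input_tokens / 1000 * usd_per_1k_input
                 + profile.output_tokens / 1000 * usd_per_1k_output)
    return per_query * profile.queries_per_day * days


# Hypothetical rates and profiles: many short queries vs. few long ones
RATE_IN, RATE_OUT = 0.001, 0.002
profiles = [
    UsageProfile("support chat", queries_per_day=200_000, input_tokens=300, output_tokens=150),
    UsageProfile("document review", queries_per_day=2_000, input_tokens=20_000, output_tokens=1_000),
]
for p in profiles:
    print(f"{p.name}: ${monthly_cost(p, RATE_IN, RATE_OUT):,.0f} per month")
```

The high-volume profile can cost more overall even though each of its queries is far cheaper, which is why volume and per-query complexity are worth tracking separately.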

This interplay can be summarized in the following table, illustrating the typical cost drivers for various usage profiles within AI deployments:

| Usage Pattern | Primary Cost Drivers | Impact on Budget |
|---|---|---|
| Real-Time High Volume | Compute Time, Low-Latency Infrastructure | Significant – Continuous Resource Demand |
| Batch Processing | Model Complexity, Compute Efficiency | Moderate – Scheduled Resource Use |
| Exploratory Analytics | Data Throughput, Storage | Variable – Dependent on Data Size |
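Much of the budget gap between real-time and batch workloads comes from provisioning: a latency-sensitive service must have capacity ready for its peak, while deferrable batch work can be spread evenly. The sketch compares replica-hours for one hypothetical day of traffic under three policies. The load curve, per-replica capacity, and 20% headroom are assumptions, and `replicas_needed` is an illustrative helper.

```python
import math


def replicas_needed(requests_per_second: float, capacity_per_replica: float,
                    headroom: float = 0.0) -> int:
    """Smallest whole number of serving replicas that covers the load plus headroom."""
    return math.ceil(requests_per_second * (1 + headroom) / capacity_per_replica)


# Hypothetical hourly load (requests/second) over one day with an evening peak
hourly_load = [5] * 8 + [20] * 8 + [60] * 4 + [20] * 4
capacity = 10.0  # requests/second one replica can serve (hypothetical)

# Real-time, fixed fleet: sized for the peak and kept running all day
fixed_peak = replicas_needed(max(hourly_load), capacity, headroom=0.2) * 24

# Real-time, autoscaled hourly: still needs headroom above each hour's load
autoscaled = sum(replicas_needed(load, capacity, headroom=0.2) for load in hourly_load)

# Batch: the same total work spread evenly across the day, no headroom needed
average_load = sum(hourly_load) / len(hourly_load)
batch = replicas_needed(average_load, capacity) * 24

print(f"fixed peak: {fixed_peak}, autoscaled: {autoscaled}, batch: {batch} replica-hours")
```

With these made-up numbers, a fixed peak-sized fleet uses 192 replica-hours, hourly autoscaling 76, and evenly spread batch work 72. Autoscaling narrows the gap, but latency-sensitive workloads still pay for headroom that batch jobs do not need.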

Strategic Recommendations for Managing AI Cost Efficiency

To optimize AI-related expenditures, organizations must adopt a multi-dimensional strategy that balances computational resources, model complexity, and practical usage. Prioritizing efficient models through techniques such as pruning, quantization, or distillation can cut costs substantially with little loss in accuracy. Additionally, using cloud providers’ spot instances (for interruption-tolerant workloads) or reserved capacity (for steady baseline demand) can lower compute rates compared with on-demand pricing. Monitoring tools that track inference requests and training workloads can flag inefficiencies early, empowering teams to adjust resource allocations dynamically.
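Quantization is the simplest of these techniques to illustrate. The sketch below shows symmetric per-tensor int8 quantization in plain Python, one common scheme among several (per-channel scales, asymmetric zero points, and 4-bit formats are also used). The weight values and the 7-billion-parameter figure are hypothetical, and `quantize_int8`/`dequantize` are illustrative helpers rather than any library's API.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: w is approximated by q * scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range [-127, 127]
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    return [v * scale for v in q]


weights = [0.12, -0.5, 0.33, 0.0, 0.91, -0.77]  # hypothetical fp32 weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, f"scale={scale:.5f}", f"max error={max_error:.5f}")

# Storage: 4 bytes per fp32 weight vs. 1 byte per int8 weight (plus one scale)
n_params = 7e9  # hypothetical model size
print(f"fp32: {n_params * 4 / 1e9:.0f} GB, int8: {n_params / 1e9:.0f} GB")
```

Each restored weight is within half a quantization step of the original, while weight memory shrinks about fourfold, which can mean fewer or cheaper accelerators per model replica.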

Cost-efficiency also depends on aligning AI deployments with business priorities. Targeted usage policies that limit unnecessary or redundant model queries can minimize wasted compute cycles. Consider this simple breakdown of cost impact factors:

| Factor | Cost Impact | Strategic Approach |
|---|---|---|
| Compute Power | High | Use scalable cloud instances, optimize workload batching |
| Model Size | Medium | Employ compression, switch to lighter models where feasible |
| Usage Volume | Variable | Implement rate limiting, prioritize key use cases |
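Rate limiting can be implemented with a token bucket, one common choice among several (fixed windows and sliding logs are alternatives). A minimal single-process sketch, assuming one bucket per user or API key and a per-request `cost` that could be raised for expensive queries; the `TokenBucket` class and its parameters are hypothetical. Injecting the clock keeps the behavior deterministic for testing, whereas a production deployment would typically enforce limits at a shared gateway.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows bursts up to `capacity` requests and refills at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity  # start full so an initial burst is allowed
        self.clock = clock
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# Simulated clock so the example is deterministic
fake_now = [0.0]
bucket = TokenBucket(rate=2.0, capacity=5.0, clock=lambda: fake_now[0])
burst = [bucket.allow() for _ in range(7)]  # first 5 pass, the last 2 are rejected
fake_now[0] += 1.0                          # one second later, 2 tokens have refilled
later = [bucket.allow() for _ in range(3)]  # 2 pass, 1 rejected
print(burst, later)
```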

By allocating AI workloads deliberately and regularly revisiting both model architecture and operational controls, companies can keep their AI adoption financially sustainable while maximizing value.