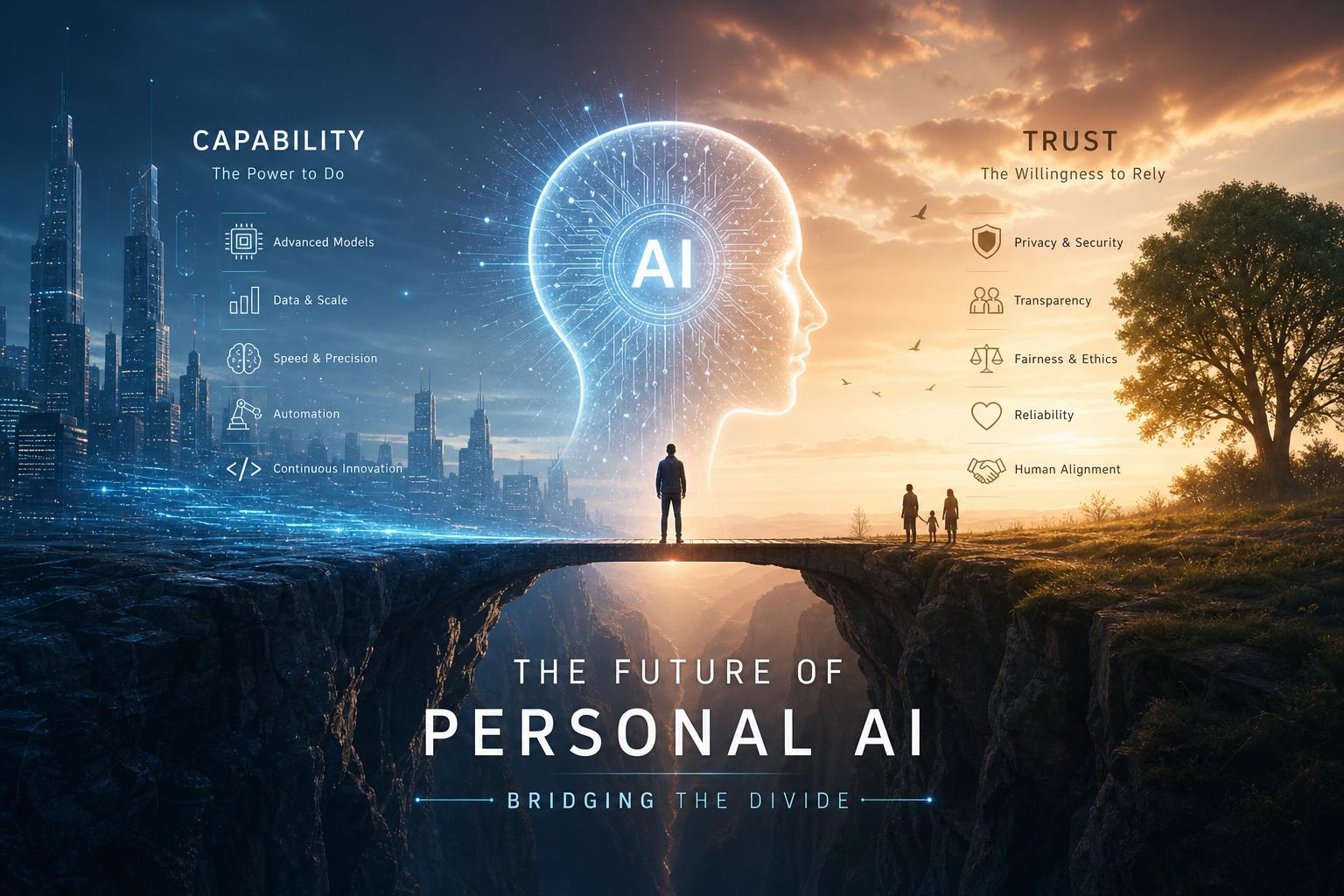

The Evolving Landscape of Personal AI Trustworthiness

- Assessing User Confidence and Technological Transparency

- Bridging the Capability Gap Through Ethical AI Design

- Empowering Users with Skills and Knowledge for Responsible AI Interaction

In today’s rapidly advancing AI landscape, the question of trustworthiness is more critical than ever. Users often struggle to tell which AI systems provide reliable assistance and behave ethically. Transparency in AI design plays a pivotal role in strengthening user confidence: clear, understandable explanations of how an AI system reaches its decisions reduce skepticism and build trust. This emphasis on transparency is not only a technical imperative but a social one, requiring AI developers and organizations to communicate openly about what their systems can and cannot do. Concretely, users benefit from features such as:

- Real-time feedback on AI confidence levels

- Accessible logs of AI decisions and data sources

- Customizable privacy and ethical settings tailored to individual user values
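The three features listed above can be made concrete with a small data model. The sketch below is a minimal illustration, assuming a single-user assistant that reports a confidence score between 0 and 1. The class names (`UserSettings`, `DecisionRecord`, `DecisionLog`), the `store_query_text` privacy option, and the 0.5 low-confidence threshold are hypothetical choices made for this example, not taken from any particular product or library.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class UserSettings:
    # Hypothetical per-user preferences (illustrative names only).
    store_query_text: bool = True          # privacy: keep or redact the user's query in the log
    low_confidence_threshold: float = 0.5  # below this, the user is warned to verify the answer


@dataclass
class DecisionRecord:
    query: str
    answer: str
    confidence: float                      # assumed model-reported score in [0, 1]
    data_sources: list[str] = field(default_factory=list)
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class DecisionLog:
    """Accessible, exportable log of AI decisions and the sources behind them."""

    def __init__(self, settings: UserSettings):
        self.settings = settings
        self.records: list[DecisionRecord] = []

    def record(self, query: str, answer: str, confidence: float,
               data_sources: list[str]) -> DecisionRecord:
        if not 0.0 <= confidence <= 1.0:
            raise ValueError("confidence must be in [0, 1]")
        # Respect the user's privacy setting before anything is stored.
        stored_query = query if self.settings.store_query_text else "[redacted]"
        rec = DecisionRecord(stored_query, answer, confidence, list(data_sources))
        self.records.append(rec)
        return rec

    def feedback(self, rec: DecisionRecord) -> str:
        # Real-time confidence message shown alongside the answer.
        if rec.confidence < self.settings.low_confidence_threshold:
            return f"Low confidence ({rec.confidence:.0%}): please verify this answer."
        return f"Confidence: {rec.confidence:.0%}"

    def export(self) -> str:
        # JSON export so users can inspect every decision and its data sources.
        return json.dumps([asdict(r) for r in self.records], indent=2)


if __name__ == "__main__":
    log = DecisionLog(UserSettings(store_query_text=False))
    rec = log.record("Is this mushroom edible?", "Possibly", 0.42, ["field-guide-db"])
    print(log.feedback(rec))  # Low confidence (42%): please verify this answer.
    print(log.export())
```

Keeping privacy filtering inside `record` means the user's choice is applied before anything is written, rather than relying on later cleanup; a real system would also need to decide how confidence scores are calibrated, which this sketch does not address.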

Bridging the capability gap means giving users the practical skills and knowledge to interact responsibly with AI tools. Ethical AI design is about more than innovation; it must also be inclusive and educational. Supportive frameworks and community-driven learning initiatives help users navigate AI’s evolving complexities safely and effectively. The following table summarizes the key characteristics that distinguish trustworthy AI implementations from those built for technical capability alone:

| Aspect | Trustworthy AI | Conventional AI |
|---|---|---|
| Transparency | Clear, user-friendly explanations | Opaque decision processes |
| User Empowerment | Ongoing education and control | Limited user insight or control |
| Ethical Framework | Integrated fairness and privacy safeguards | Minimal ethical considerations |